Elonara Social is self-regulating. On Elonara, communities take shape around who people actually want to be, and who they want to hang out with. There’s no moderator with a god complex hovering over every post, no algorithm quietly deciding who should see what. Just people, choosing who they connect with—and who they don’t.

What “self-regulating” actually means

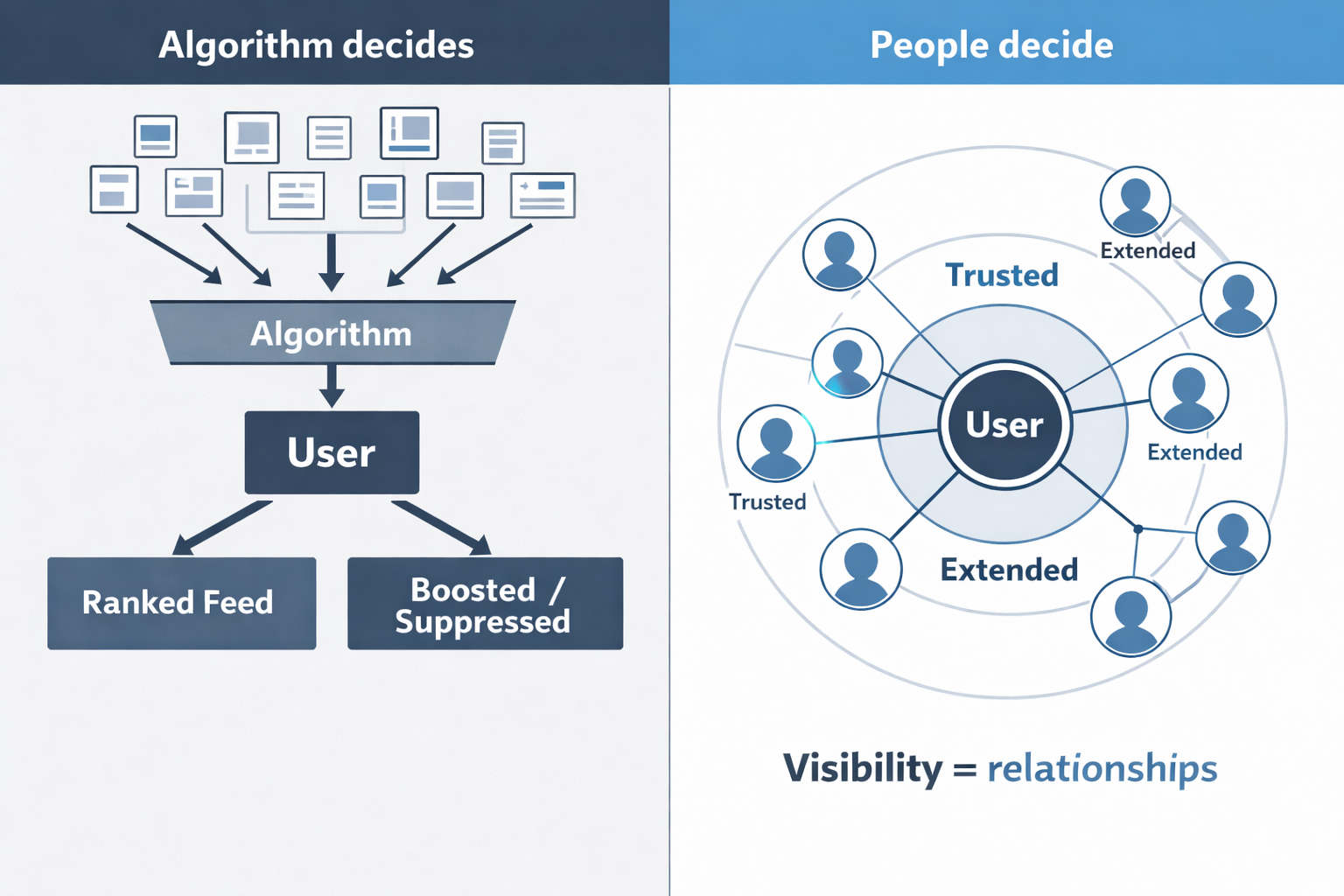

On Elonara, visibility is not controlled by a feed algorithm. It is controlled by people.

Relationships are structured through circles. These circles determine what you see and who sees you. If someone no longer fits a community—whether because of tone, behavior, or intent—people don’t need to report them or escalate anything.

They simply move on.

Over time, that has a predictable effect. Reach narrows. Conversations stop. The system doesn’t remove the person. The community does, indirectly, through disengagement.

This is how online communities can function without moderators or algorithmic enforcement. Not by removing content, but by controlling visibility through human relationships.
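To make the idea concrete, here is a minimal sketch of visibility controlled by relationships rather than by a ranking system. It is a toy model, not Elonara's implementation: the `CircleGraph` class, its method names, and the rule that a viewer sees an author only while the viewer keeps that author in a circle are all assumptions made for illustration.

```python
from collections import defaultdict


class CircleGraph:
    """Toy model of circles (hypothetical, not Elonara's actual API)."""

    def __init__(self):
        # Maps each viewer to the set of people they keep in their circles.
        self._circles = defaultdict(set)

    def connect(self, viewer, author):
        """The viewer chooses to keep the author in a circle."""
        self._circles[viewer].add(author)

    def disconnect(self, viewer, author):
        """The viewer moves on. No report, no escalation."""
        self._circles[viewer].discard(author)

    def can_see(self, viewer, author):
        """Assumed rule: you see someone only while they are in your circles."""
        return author in self._circles[viewer]

    def reach(self, author):
        """Count how many people still keep the author in a circle."""
        return sum(1 for kept in self._circles.values() if author in kept)


graph = CircleGraph()
for viewer in ("ana", "ben", "cy"):
    graph.connect(viewer, "dee")

reach_before = graph.reach("dee")   # three people see dee
graph.disconnect("ben", "dee")      # ben simply moves on
reach_after = graph.reach("dee")    # two people see dee
```

Nothing in this sketch removes a post or a person. Reach is just a count of other people's choices, which is why it narrows when they disengage.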

How communities form without moderation

You can start a space for people who share your sense of humor, your intensity, your way of talking. It might be sharp, it might be gentle. Whatever it is, it will attract people like you.

This is not a theory. It’s how social behavior works when it isn’t overridden by ranking systems or enforced guidelines.

If you create a community with a clear tone, people who match it will stay. People who don’t will leave—or be left behind.

What happens when someone doesn’t fit

When someone crosses into a community that doesn’t match their tone, and keeps pushing, they might eventually find themselves alone. Not because a moderator banned them. Because everyone else disconnected. One by one.

A concrete example

Imagine a small, tight conversation space built around thoughtful discussion. Someone joins and consistently pushes it toward hostility.

On a traditional platform:

- The content might be boosted if it drives engagement

- Or it might trigger moderation if reported

On a self-regulating platform:

- People simply disconnect

- The person’s visibility shrinks

- The conversation continues without them

The system doesn’t intervene. It doesn’t need to.
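The self-regulating case above can be walked through as a small simulation. This is a sketch under stated assumptions: the function `simulate_disengagement`, the member names, and the premise that everyone starts out connected to everyone are hypothetical, chosen only to show reach shrinking while the rest of the conversation stays intact.

```python
def simulate_disengagement(members, newcomer):
    """Members disconnect from the newcomer one by one (illustrative only)."""
    everyone = list(members) + [newcomer]
    # Assumption: at the start, each person keeps everyone else in a circle.
    circles = {p: {q for q in everyone if q != p} for p in everyone}

    reach_over_time = []
    for member in members:
        # One person quietly disconnects. Nothing is reported or removed.
        circles[member].discard(newcomer)
        reach = sum(1 for p in members if newcomer in circles[p])
        reach_over_time.append(reach)

    # The original members are still connected to each other.
    still_connected = all(
        q in circles[p] for p in members for q in members if p != q
    )
    return reach_over_time, still_connected


reach_over_time, still_connected = simulate_disengagement(
    ["ana", "ben", "cy"], "dee"
)
print(reach_over_time)   # [2, 1, 0]
print(still_connected)   # True
```

No step in the loop acts on the newcomer directly. Their reach reaches zero only because each member made an individual choice.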

That’s not censorship. That’s consequence.

You get the community you create

If you want to build a community where everyone shares your attitude, go for it.

You can be mean and make a mean community, and all the mean people can join and be happy mean people together. That’s fine. That’s what freedom looks like.

If you want to build a community where everyone’s kind, go for it. You can be kind and make a kind community, and all the kind people can join and be happy kind people together. That’s fine. That’s what freedom looks like.

Why silos are not a failure

Most platforms treat fragmentation as a problem. They try to force shared space, unified rules, and universal visibility.

But real communities don’t work that way.

Different groups have different norms. Different tolerances. Different ways of communicating. Trying to flatten that into a single system creates constant friction.

Silos are not a breakdown. They are a natural outcome of people organizing themselves.

In a self-regulating social network, silos are how coherence emerges.

Start where you already are

You don’t need permission to build a space that fits you.

Start a conversation.

Invite the people you actually want to hear from.

Let the shape of it emerge.

That’s how communities form.

Not by design documents or moderation policies.

By people deciding, in real time, who belongs in the room.

Mean or kind, you get the community you create.

And that’s OK.

That’s how it’s supposed to work.
